The last time SAP released an on-premise version of the SAP ABAP Platform/NetWeaver developer edition was on Feb 15th, 2021. That version was called SAP ABAP Platform 1909, Developer Edition, and it was provided as a Docker image, allowing developers to run it on their own machines as a container. That edition was available until Dec 2021, when the Log4J vulnerability was revealed. ABAP Platform 1909 became a victim of Log4J, like much other software that vendors decided to pull until the vulnerability was properly addressed.

SAP took some time to decide on the fate of the ABAP Platform 1909 container image. Initially, the decision was scheduled to be announced on January 10th, 2022. That was delayed until Oct 23rd, 2022, when SAP announced that “SAP’s product and delivery standards have evolved…”. Basically, the statement said that SAP prefers cloud versions over something that independent developers can install by themselves. And, in fact, CAL versions were provided in the meantime. Anyhow, this move sparked quite a lot of discussion and criticism (e.g. here (363 comments), or here) within the SAP developer community.

Following all that, an idea was created on Dec 12th, 2022 on the SAP influence page. It got the attention of 278 voters. After approximately 7 months the idea status suddenly moved to Delivered. Via a blog, SAP announced that the Docker-based version of the ABAP server is back. It is again ABAP Platform Trial 1909, with a slightly higher Service Pack version: 07.

How to make it run?

Just follow the instructions on the Docker Hub page. All you need is a machine with a lot of memory (32+ GB), a Docker Hub account, and, if you are on Windows, Docker Desktop. Linux and macOS are supported as well. Once you get Docker Desktop up and running, fire up the command below to pull the image from the hub to your machine:

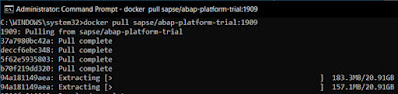

docker pull sapse/abap-platform-trial:1909
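Since the image is large and the system is memory hungry, it can save time to check the available RAM before pulling. Below is a minimal sketch for Linux or a WSL2 shell. The helper names and the default threshold of 30 are my own assumptions (a machine with 32 GB installed usually reports slightly less than 32 in `/proc/meminfo`), and inside WSL2 the value reflects the memory assigned to the WSL VM, not the whole Windows host.

```shell
# Minimal sketch: check total RAM before pulling the image (Linux / WSL2 only).
# MIN_GB and the function names are illustrative assumptions, not part of the SAP image.
MIN_GB=30

# Print total memory in whole GiB, read from a meminfo-style file
# (defaults to /proc/meminfo when no file is given).
mem_total_gb() {
  awk '/^MemTotal:/ { printf "%d\n", $2 / 1024 / 1024 }' "${1:-/proc/meminfo}"
}

# Succeed only if the reported memory is at least the threshold
# ($2, or MIN_GB when omitted).
has_enough_memory() {
  [ "$(mem_total_gb "$1")" -ge "${2:-$MIN_GB}" ]
}

# Usage (not executed here):
#   has_enough_memory && docker pull sapse/abap-platform-trial:1909
```

If the check fails, the pull itself will still work; it is the later start of the HANA DB that tends to suffer.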

After 30 minutes or so (depending on the speed of your internet connection), the image extraction part starts, which adds the new image to your Docker. Once it is finished, start the container with the command below:

docker run --stop-timeout 3600 -i --name a4h -h vhcala4hci -p 3200:3200 -p 3300:3300 -p 8443:8443 -p 30213:30213 -p 50000:50000 -p 50001:50001 sapse/abap-platform-trial:1909 -skip-limits-check

Once you get a message in the command prompt saying “*** Have fun! ***”, the ABAP trial is up and running.
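If you do not want to watch the terminal, the wait for that message can be scripted. This is only a sketch: the function name is made up, and it assumes the startup output of the container is also visible through `docker logs`.

```shell
# Marker printed by the container once startup has finished (taken from the text above).
READY_MARKER='*** Have fun! ***'

# Read log lines from stdin and return 0 as soon as the marker shows up.
# Returns 1 if the input ends without the marker.
wait_for_ready() {
  while IFS= read -r wfr_line; do
    case "$wfr_line" in
      *"$READY_MARKER"*) return 0 ;;
    esac
  done
  return 1
}

# Usage from a second terminal (not executed here):
#   docker logs -f a4h 2>&1 | wait_for_ready && echo "ABAP trial is up"
```

The marker is quoted inside the `case` pattern, so its asterisks are matched literally and not treated as wildcards.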

To stop it, just hit CTRL+C in the terminal window that started it. Log lines like the ones below will pop up:

Interrupted by user

My termination has been requested

Stopping services

Terminating -> Worker Processes (2919)

..

Finally passing away ...

Good Bye!

To start it again, run the container (aka regular start) with the command below:

docker start -ai a4h
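The difference between the first start (docker run) and a regular start (docker start) can be wrapped in a small check. A minimal sketch, with a made-up helper name, that decides based on whether a container called a4h already exists:

```shell
CONTAINER=a4h

# Read container names from stdin (one per line) and succeed
# only if one of them is exactly the name given as $1.
has_container() {
  grep -Fxq -- "$1"
}

# Usage (not executed here):
#   if docker ps -a --format '{{.Names}}' | has_container "$CONTAINER"; then
#     docker start -ai "$CONTAINER"     # regular start of the existing container
#   else
#     echo "no container yet, use the docker run command from above"
#   fi
```

The `-x` option makes grep match whole lines only, so a container named a4h-old would not be mistaken for a4h.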

In my case, as I run Docker on WSL2 (there are different engines available to power it), I faced an issue during the first attempt to run the container. It hung at “HDB: starting” and did not go anywhere for a couple of hours.

WARNING: the following system limits are below recommended values:

(sysctl kernel.shmmni = 4096) < 32768

(sysctl vm.max_map_count = 65530) < 2147483647

(sysctl fs.file-max = 2668219) < 20000000

(sysctl fs.aio-max-nr = 65536) < 18446744073709551615

Hint: consider adding these parameters to your docker run command:

--sysctl kernel.shmmni=32768

Hint: if you are on Linux, consider running the following system commands:

sudo sysctl vm.max_map_count=2147483647

sudo sysctl fs.file-max=20000000

sudo sysctl fs.aio-max-nr=18446744073709551615

sapinit: starting

start hostcontrol using profile /usr/sap/hostctrl/exe/host_profile

Impromptu CCC initialization by 'rscpCInit'.

See SAP note 1266393.

Impromptu CCC initialization by 'rscpCInit'.

See SAP note 1266393.

sapinit: started, pid=14

HDB: starting
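The limits warning in this log can be turned into a compact list of recommended values. The sketch below is only an illustration with a made-up function name; it assumes each limit is printed on a single line in the form "(sysctl NAME = CURRENT) < RECOMMENDED", as in the log above.

```shell
# Read container log lines from stdin and print "name=recommended_value"
# for every system limit reported as too low.
recommended_limits() {
  sed -n 's/^[[:space:]]*(sysctl \([a-z0-9_.-]*\) = [0-9]*) < \([0-9]*\)[[:space:]]*$/\1=\2/p'
}

# Usage (not executed here):
#   docker logs a4h 2>&1 | recommended_limits
```

According to the hints in the log itself, kernel.shmmni is meant to be passed to docker run via --sysctl, while the other values are set with sudo sysctl on a Linux host.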

This is due to the fact that with WSL2 there are no sizing options. WSL2 has a built-in dynamic memory and CPU allocation feature, which means that Docker uses only the memory and CPU it requires. But as there are other processes in Windows that need OS resources too, this became a problem because WSL2 consumed all available memory. This can be solved by editing the .wslconfig file located in the Windows USERPROFILE folder. I altered the file as below:

[wsl2]

memory=26GB # Limits the memory available to Docker in WSL

processors=5 # Limits the number of processors
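For repeatable setups the same file can be generated from a script. This is a hedged sketch: the function name is hypothetical, and the target path in the usage comment is an assumption about where the Windows user profile is mounted inside WSL. Note that WSL only reads .wslconfig at startup, so the change takes effect after running `wsl --shutdown` from Windows, and Docker Desktop will likely need a restart afterwards.

```shell
# Print a .wslconfig with a memory limit in GB ($1) and a processor limit ($2).
# Comments are kept on their own lines to stay on the safe side of the parser.
make_wslconfig() {
  printf '[wsl2]\n'
  printf '# Upper bound for the memory of the WSL2 VM\n'
  printf 'memory=%sGB\n' "$1"
  printf '# Upper bound for the number of logical processors\n'
  printf 'processors=%s\n' "$2"
}

# Usage from a WSL shell (path is an assumption, replace <user>; not executed here):
#   make_wslconfig 26 5 > /mnt/c/Users/<user>/.wslconfig
```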

Another option, instead of modifying the .wslconfig file, is to start the container with two additional parameters (-m and --cpus) of the “docker run” command:

docker run --stop-timeout 3600 -i --name a4h -h vhcala4hci -p 3200:3200 -p 3300:3300 -p 8443:8443 -p 30213:30213 -p 50000:50000 -p 50001:50001 -m 26g --cpus 5 sapse/abap-platform-trial:1909 -skip-limits-check
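To confirm that the limits were really applied to the container, docker inspect can print them. It reports memory in bytes and CPUs in units of one billionth of a CPU, so the sketch below adds two small (made-up) helpers to convert them back into readable numbers.

```shell
# Convert a byte count into whole GiB (integer division).
bytes_to_gib() {
  echo $(( $1 / 1024 / 1024 / 1024 ))
}

# Convert nano-CPUs into whole CPUs (fractions such as 2.5 are truncated).
nano_to_cpus() {
  echo $(( $1 / 1000000000 ))
}

# Read "MEMORY_BYTES NANO_CPUS" from stdin and print a readable summary.
report_limits() {
  read -r rl_mem rl_cpu
  echo "memory=$(bytes_to_gib "$rl_mem")GiB cpus=$(nano_to_cpus "$rl_cpu")"
}

# Usage (not executed here):
#   docker inspect --format '{{.HostConfig.Memory}} {{.HostConfig.NanoCpus}}' a4h | report_limits
```

A value of 0 for either field means that no limit is set on the container.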

If you can’t get your container up and running, there are a couple of places to check with respect to the HANA DB:

/usr/sap/HDB/HDB02/vhcala4hci/trace

/usr/sap/HDB/HDB02/vhcala4hci/trace/DB_HDB

You may need to review the log files in those folders. In most cases the issue will be a lack of memory or hard drive space.
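A quick way to scan those files without opening each one is to filter them for typical resource errors. This is a rough sketch under stated assumptions: the function name is made up, the search terms are my guess at what memory or disk problems look like in the traces, and the `*.trc` pattern in the usage comment assumes the trace files use that extension.

```shell
# Trace folder from the text above.
TRACE_DIR=/usr/sap/HDB/HDB02/vhcala4hci/trace

# Filter stdin for lines hinting at memory or disk exhaustion.
# The keywords are assumptions, extend them as needed.
resource_errors() {
  grep -i -E 'out of memory|no space left on device'
}

# Usage (not executed here):
#   docker exec a4h sh -c "tail -n 200 $TRACE_DIR/*.trc" | resource_errors
#   docker exec a4h df -h     # free disk space as seen from inside the container
```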

For extending the licenses of either the ABAP Platform (AS ABAP) or the HANA DB, see my post here.

One more thing to clarify is the naming convention: the difference between SAP NetWeaver AS ABAP Developer Edition and SAP ABAP Platform Trial. One part is that SAP has shifted away from NetWeaver to ABAP Platform. Technology-wise, the ABAP trial is delivered as a Docker container, whereas the NetWeaver Developer Editions are based on virtual machine software like Oracle VirtualBox.

In closing, I must say that I really appreciate the effort of all the people who made this great distribution of ABAP Platform available again. I especially appreciate that SAP made a commitment to also deliver future releases (versions 2023, 2025 and 2027, according to the release strategy for SAP S/4HANA). Even more, they plan to release the ABAP Platform Trial whenever there is a new SP update. Thanks again for that!

More information:

Power up your SAP NetWeaver Application Server ABAP Developer edition

SAP ABAP Platform 1909, Developer Edition – installation on WINDOWS OS